For code that is important to you, for code worth reading and not merely executing, for code whose history and references are meaningful and whose authors you'll *want* to know, interact and work with, there is now VaMP. For all other code, there are all the other versioning systems and if those fully satisfy your needs, then enjoy them as they are and good luck to you.

VaMP provides a wide range of support for coding as an intellectual activity, from verifiable, indelible and accumulated history, authorship, readership and investment of trust to smooth exploration, pooling of resources and collaboration that may be as deep and intricate or as superficial and plain as those involved care to make it at each step of the way. All code and work in general is thus securely anchored in its context at all stages - the provenience as well as the accumulated history matters for any versioned product, whether code or anything else. There are hard, cryptographic guarantees on the full state of the codebase at any version but the value and meaning of these is derived, as it should be, from the expertise and reputation of those that issue the guarantees in the first place. And on this support of visible, verifiable and indelible history of activity, one's own reputation can be built and continuously accumulated so that no useful effort need ever be lost and expertise as well as usefulness can be more easily identified and rewarded at every stage.

For the concrete implementation of the above, VaMP relies on my previous regrind and updated implementation of old Unix's diff and patch as well as the full cryptographic stack that I had implemented for Eulora (a first version of which you can find discussed in the EuCrypt category). VaMP is based on Mircea Popescu's design, hence the MP in the name. And since it was the infuriating broken state by design of the V tool that made me start the work on this in the first place[1], I'm preserving it as the first letter in VaMP's name, to acknowledge that the experience of using V provided indeed the perfect help and motivation to finally move away from all its misery.

As to how VaMP works in practice, the short of it is - very smoothly and extremely usefully indeed! It's a pleasure to have it produce patches and signatures, check signatures, find and apply the relevant patches for any given task and even create essentially "branches" for exploring some changes to the code, all from one single command line tool and without the need for any additional scripts and hacked "a" "b" or hidden directories or whatnot. As a little but very helpful bonus on the side, it's even more satisfying to have it quickly check even a large legacy codebase and spit out neatly listed any and all broken bits and pieces that have to be fixed as a minimum requirement for the pile to be even considered worth looking at by a human eye. If you are curious, such broken pieces include all empty files and empty directories but also duplicate files and broken format files. And if you wonder "but how can it create the first patch, if it won't accept an empty directory as input", then rest assured that it's possible and otherwise keep wondering until you figure it out - it's good mental exercise! Or maybe start interacting and doing enough useful things so you get to the point where you may actually use VaMP and then it will all fall in place gradually and naturally anyway.
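To make the list of "broken bits" concrete, here is a minimal sketch of such a scan, assuming only the three checks that can be stated without knowing VaMP's internals: empty files, empty directories and duplicate files. The function name `find_broken_bits` and the use of SHA3-256 to detect duplicates are illustrative assumptions, not VaMP's own code, and the "broken format" check is left out since its rules are not given here.

```python
import hashlib
import os


def find_broken_bits(root):
    """Return (empty_files, empty_dirs, duplicate_groups) found under root.

    Hypothetical helper for illustration only; not part of VaMP.
    """
    empty_files, empty_dirs = [], []
    by_digest = {}
    for dirpath, dirnames, filenames in os.walk(root):
        # a directory with neither subdirectories nor files is empty
        if not dirnames and not filenames:
            empty_dirs.append(dirpath)
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.getsize(path) == 0:
                empty_files.append(path)
                continue
            # group non-empty files by content digest to spot duplicates
            with open(path, "rb") as handle:
                digest = hashlib.sha3_256(handle.read()).hexdigest()
            by_digest.setdefault(digest, []).append(path)
    duplicates = [sorted(paths) for paths in by_digest.values() if len(paths) > 1]
    return sorted(empty_files), sorted(empty_dirs), sorted(duplicates)


if __name__ == "__main__":
    files, dirs, dups = find_broken_bits(".")
    print("empty files:", files)
    print("empty directories:", dirs)
    print("duplicate files:", dups)
```

Run against a legacy tree, the three lists give a quick first inventory of what would have to be cleaned up before the tree is worth a closer look.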

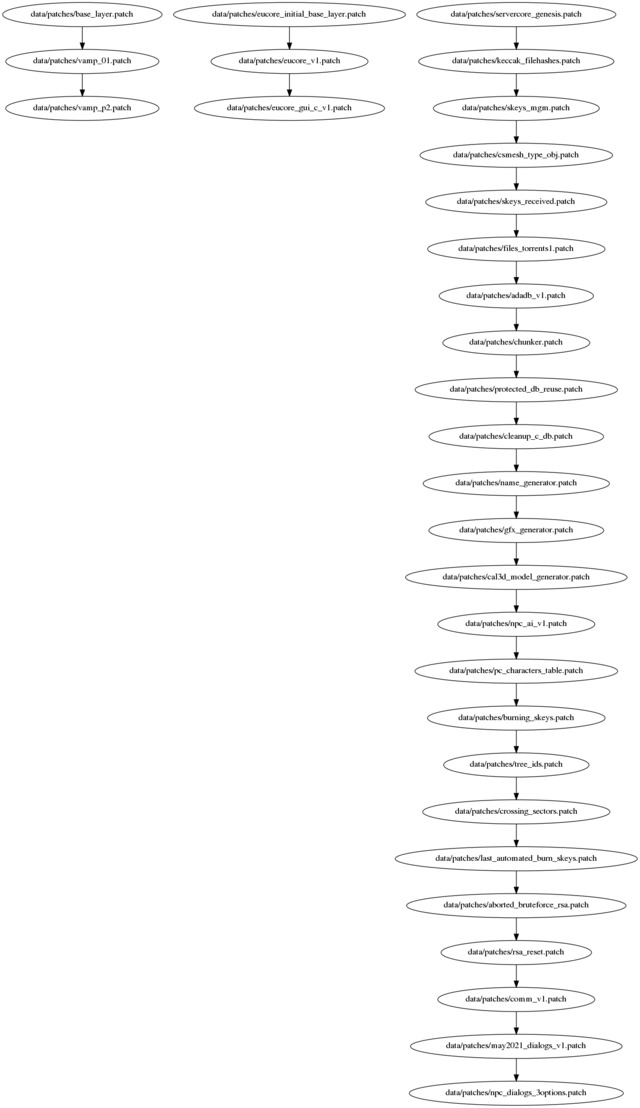

As a possible hint for the above mystery and to give one illustration of use, at least, note that VaMP can and happily does find precisely and exactly the relevant patches for a given task even out of a large pile of patches that might be related or unrelated to any degree. This is not by accident but a direct consequence of the fact that VaMP is by design a tool meant for real-life scenarios (these tend to involve people working on more than one project at times, go figure!) and a tool that works *for* you, not against you. As such, far from requiring that you mould yourself to fit computers' limitations[2], what VaMP does is to harness the computer's resources for your benefit. In this specific case, what this means is that VaMP can indeed handle any pile of patches and never chokes on them no matter how related or unrelated they might be. It works correctly with any set of patches just as it works correctly with any graph that a pile of patches might make - the meaning of it all is very clearly, strictly and fully defined at all times so that one can even get a correct snapshot of *all* their codebases at the same time, shown side by side as either independent or connected trees (or even graphs, gasp!), as the case might be. Here's just one small example of the simplest sort (unconnected trees), from some of the initial test-uses (and yes, the first tree on the left is the one of VaMP itself):

In short and in conclusion, with VaMP, coding can be both fun and intellectually satisfying, once again. As it always tends to happen with any activity - it can become a pleasant thing to do indeed, but if and only if you have the correct tools and are working with the right people. And the two are more related than you think!

1. In the end it didn't take 3 weeks but 3 months, all in all and even *all* considered (and there is, sadly, a lot more considered in there than was initially the case; such is life). To put this into perspective though, do note that it took as long as 6 years for v to not get anywhere past its broken state, so it's an achievement of sorts, too.

2. In general, moulding to the computer's limitations happens every time you have to look for a workaround to still achieve what you were trying to achieve in the first place when you hit some "can't do X" restriction. For example and taken straight from the V experience: you can't change a file back to a previous state because omg, the machine is confused and won't know how to order the patches correctly anymore. While V decrees in such a situation that "you can't do that, you are also an idiot for wanting to do it", VaMP instead makes sure that the machine is *never* confused, that everything still works correctly in such a situation as well as in any other that might arise within the scope of the tool and then it lets you do exactly what you want to do, providing also full support to see exactly what you are doing.


Adding here a listing of the sigs dir for the test run illustrated above, see maybe what you can spot in it:

    $ stat -c "%s %n" data/sigs/*
    1337 data/sigs/aborted_bruteforce_rsa.patch.sig
    1337 data/sigs/adadb_v1.patch.sig
    1337 data/sigs/base_layer.patch.sig
    1337 data/sigs/burning_skeys.patch.sig
    1337 data/sigs/cal3d_model_generator.patch.sig
    1337 data/sigs/chunker.patch.sig
    1337 data/sigs/cleanup_c_db.patch.sig
    1337 data/sigs/comm_v1.patch.sig
    1337 data/sigs/crossing_sectors.patch.sig
    1337 data/sigs/csmesh_type_obj.patch.sig
    1337 data/sigs/eucore_gui_v1.patch.sig
    1337 data/sigs/eucore_initial_blayer.patch.sig
    1337 data/sigs/eucore_v1.patch.sig
    1337 data/sigs/files_torrents1.patch.sig
    1337 data/sigs/gfx_generator.patch.sig
    1337 data/sigs/keccak_filehashes.patch.sig
    1337 data/sigs/last_automated_burn_skeys.patch.sig
    1337 data/sigs/dialogs_v1.patch.sig
    1337 data/sigs/name_generator.patch.sig
    1337 data/sigs/npc_ai_v1.patch.sig
    1337 data/sigs/npc_dialogs_3options.patch.sig
    1337 data/sigs/pc_characters_table.patch.sig
    1337 data/sigs/protected_db_reuse.patch.sig
    1337 data/sigs/rsa_reset.patch.sig
    1337 data/sigs/score_genesis.patch.sig
    1337 data/sigs/skeys_mgm.patch.sig
    1337 data/sigs/skeys_received.patch.sig
    1337 data/sigs/tree_ids.patch.sig
    1337 data/sigs/vamp_01.patch.sig
    1337 data/sigs/vamp_p2.patch.sig

It was nifty reading a piece of this complexity with most of the links already showing up in ":visited" red. (Of course, merely loading a document and even reading may still be a ways off from understanding.)

The only exceptions were the particular selection parameters of "Enemies", and "The State of the (f)Art" which I figure I caught from another terminal while on the road, but didn't give much attention amid too many of my own things being on fire. Since I sadly didn't comment at the time, I can only note here on the reread: I recall on one hand a reduction of my personal "scary factor" on RSA by the observation that signing is just decryption by another name, and thinking through how that could be. And on the other, some vague (butt)hurt with the characterization of other implementations as unmaintained given my own work on v.pl which solved the worst of the performance problems, albeit not the more numerous silent failure problems; and with the "cyclic graph" problem as having lurked in the shadows given that Will and I had both mentioned it. On that part, it looks like we both gave in a bit too easily to the "problem exists only in yer own broken head" conclusion (perhaps self-deprecation as a cheaper substitute for comprehension?) with him not noticing it affected existing implementations, manifest notwithstanding, and me accepting the "pilot error" interpretation from one who I thought to indeed hold such ideals as "machine must correctly and obediently handle all inputs" or "no wedge states". Also I didn't discover the significant "failing late" part. Unlike Minigame/you this year, I indeed hadn't used the thing in practice enough to feel the effects on my own skin. (I have now; it was exactly this that caused v.pl to silent-splode in my face recently, the culprit being a patch on an abandoned experimental branch interfering with god knows what on the main one.)

Back to the present, I didn't find myself wondering "how can it create the first patch": it seemed obvious enough so I'll leave the mystery for the next to find it. What I did wonder was how a general (non-tree) graph shape could work in any kind of coherence with each edge being a signed patch and "guarantees on the full state", since pre-manifest V does it by dropping the latter and git does it by dropping the former (and quite infuriatingly so, for one trying to follow what's going on in any branch-and-merge oriented git project). Perhaps it will come to me. Are you still using the patch name to identify that full state, at least at the UI level?

"find and apply the relevant patches for any given task" - this is also a bit mysterious; not sure if I just don't know how you mean "task" or there's something deeper.

In MP's spec, "calculate the keccak hash of every line as padded with now()[vi] and of length equal to the longest line seen" - I don't follow that last part at all: the hash output gets lengthened as new lines are seen? That would seem to make the hashing rather pointless, if it's no shorter than the original.

My implementation of VaMP is quite flexible there, meaning that it allows the user to go with the default (which is indeed the length of the longest line in the input files), to pick a specific length (whatever positive number they fancy) or to even skip such fanciness of hashing altogether and just compare the lines directly. (On a re-read of my article above, I notice now that the paragraph on this didn't make it to the published version but at any rate, it's written here now so I won't update the article itself.)

To address the point underlying your question, though: the whole point of using line hashes in VaMP is to improve the speed of a simple comparison that aims to decide whether 2 given lines are identical or not (and is not at all interested in anything more detailed than a binary yes/no answer). This is not exactly nor necessarily equivalent to "having something shorter to compare than the lines themselves" because the comparison proceeds anyway byte by byte and stops at the first byte that is different. Hence, when comparing 2 lines of 100 bytes each that have the first 99 bytes identical and only the 100th byte different, the comparison of the lines themselves will *still* take longer than the comparison of their 2 corresponding hashes that might be for instance 200 bytes each but that have only the 1st byte identical and the 2nd byte already different (or any other byte before the 100th, for that matter). In other words, hashes are used for their supposed core benefit, namely their "spreading" effect, not their potentially shorter length - even for very similar lines (such as those in the example above, with only the last character different), their hashes should end up with different bytes at much earlier positions, thus helping the comparison reach its conclusion faster.
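The 100-byte example can be tried out directly. The sketch below counts how many bytes a stop-at-first-difference comparison examines, once on the lines themselves and once on 200-byte hashes of them. It uses `hashlib.shake_256` from Python's standard library as a stand-in Keccak-based sponge with adjustable output length; VaMP's own Keccak implementation and parameters are not assumed to match, and the helper names are made up for the illustration.

```python
import hashlib


def bytes_compared(a: bytes, b: bytes) -> int:
    """Count bytes examined by a comparison that stops at the first difference."""
    count = 0
    for x, y in zip(a, b):
        count += 1
        if x != y:
            return count
    return count  # no difference found within the common length


def line_hash(line: bytes, length: int) -> bytes:
    # SHAKE-256 is only a stand-in here, chosen because its output length is adjustable
    return hashlib.shake_256(line).digest(length)


# two lines of 100 bytes each, identical except for the very last byte
line_a = b"x" * 99 + b"a"
line_b = b"x" * 99 + b"b"

direct = bytes_compared(line_a, line_b)
hashed = bytes_compared(line_hash(line_a, 200), line_hash(line_b, 200))
print("bytes examined on the lines themselves:", direct)
print("bytes examined on their 200-byte hashes:", hashed)
```

The direct comparison has to walk all 100 bytes, while the hashed one is expected to stop within the first few bytes even though each hash is twice as long as its line. The count leaves out the cost of computing the hashes in the first place.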

Like everywhere else, the approach includes some trade-offs, of course, with the one that can easily hurt most in practice (yes, even noted in actual practice) being not so much the length of the hash in itself at comparison time but the increased overhead of *producing* longer hashes. In other words, the time gained when performing all the required comparisons might end up less than the time added for computing the hashes, if they are too long. The trouble is that "too long" is currently more of an informed guess at best, instead of being something exactly known because despite all the millions of lines of code that have been hashed at some point or another via Unix's diff or git's diff or anything similar, apparently nobody has yet done any sort of useful analysis of actual data to provide something reliable on which to base a decision of the sort "x length is optimal". Hence, the only rational approach available currently is to pick an upper limit (which is the length of the longest line in a given file) and allow the user to set otherwise the length as they want it, basically to allow both the sort of data collection + investigation that nobody has yet done *and* whatever trade-off the user decides they prefer at one time or another.
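One rough way to see where the overhead of longer hashes comes from is to count calls to the underlying permutation. In a sponge construction, each block of output beyond the first costs one more permutation call. The sketch below does that count under a loud assumption: a rate of 136 bytes per block, which is the SHAKE-256 figure, while VaMP's Keccak parameters may well differ. `permutation_calls` is a hypothetical helper, not anything from VaMP.

```python
import math

RATE_BYTES = 136  # assumption: SHAKE-256 rate; VaMP's Keccak setup may use another value


def permutation_calls(message_len: int, output_len: int, rate: int = RATE_BYTES) -> int:
    """Number of permutation calls a sponge needs for one message and one output."""
    # padding always adds at least one byte, so a full final block spills into a new one
    absorb_calls = message_len // rate + 1
    squeeze_blocks = math.ceil(output_len / rate)
    # the first output block is available right after the last absorb call
    extra_squeeze_calls = max(0, squeeze_blocks - 1)
    return absorb_calls + extra_squeeze_calls


for out_len in (32, 200, 1000):
    print(out_len, permutation_calls(100, out_len))
```

Under this model the per-line cost grows in steps of one permutation call per extra block of requested output, so for short lines the requested hash length can dominate the work. Whether that outweighs the comparison time saved is exactly the empirical question left open above.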

Worth perhaps noting also that producing a patch and otherwise simply producing a list of differences between 2 files or 2 directories are different things from VaMP's point of view and not by accident. While a patch is very useful for its hard guarantees, there is of course a price to pay for these and there are cases when I simply want to have a quick look at what is different (or not) between 2 files/directories without any need for more than that. So VaMP will produce either a patch or just a plain list of differences, as requested and without any trouble, as simple as that.

Well, the question hiding in your statement is quite mysterious itself, bereft of a question mark as it finds itself! *How* exactly is that bit mysterious to you? For some attempted clarification to the "don't know" part that might or might not be a question by itself - I used "task" there as an umbrella term to cover all the various commands that VaMP is already able to handle because there are indeed more than just "patch this on that" or "produce a patch for these 2 inputs". And I avoided on purpose terms that are already stuck with rather narrow meanings/use-cases, such as "checkout" of git's and similars or "press" of v's because neither really fully fits (if it did, there wouldn't be any need for a new tool really) - arguably they'd be more like partial synonyms at best.

File names are really for the user's convenience but totally indifferent from the machine's point of view, basically the exact equivalent of the label on a package - it might accurately reflect what is inside or it might not. Hence, I would rather assume that all users will *prefer* to give meaningful names to their patches but the code certainly neither cares about nor relies on file names at any point and will work just the same with any names for patches or sigs or whatever. For that matter, if you look carefully at the signatures listed in my first comment, you might notice some that don't match the names of any of the patches from the graph shown in the article.

Perhaps this is a bit related in itself to how it came that your work/discussion of v and v.pl failed to be perceived as anywhere near "he took on the maintenance of v.pl" - there's a marked difference between studying something or even trying something out and taking on its maintenance. While I was aware that you looked at v.pl in detail and even patched it to improve on its performance, it all seemed at most a sort of "trying it out", not at all taking over. Possibly the one crucial missing part that I think would have achieved more easily that move from "interested in this" to "maintaining this" is some discussion on this very topic (or possibly even better, on its future) with the active people otherwise involved, at least on their blogs if it was already too late for the forum to be the more natural avenue for such discussion.

This made complete sense on first read. But then I thought, if you trust the "spreading" property of the hash function then you don't need to squeeze out all those extra bits from the sponge, while if you don't then you can't count on comparisons terminating early. I can see it though as a sort of most conservative default. If the hash is good then a tighter one like 2*log2(line_count) - which for any real codebase would fit in a 64-bit word - should be just as good as far as any data-driven experiment can detect.

I'll conjecture that a (properly implemented) tree-based interning table would outperform Keccak at any length, while providing a hard guarantee on the performance bound. Though as far as trade-offs, I can imagine that in this application the Keccak code would be "free" and the tree algorithms perhaps not needed otherwise.
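For reference, a tree-based interning table can be sketched as a byte trie that hands out one small integer per distinct line, so that later equality checks become integer comparisons. This is only an illustration of the idea and not a claim about its speed relative to Keccak. The class name is made up, and each node's children are kept in a Python dict for brevity, which is itself a hash table; a fixed 256-slot array or a sorted child list would be needed to avoid hashing altogether.

```python
class LineInterner:
    """Byte trie mapping each distinct line to a small integer id (illustrative only)."""

    def __init__(self):
        self._root = {}    # child nodes keyed by byte value (0..255)
        self._next_id = 0

    def intern(self, line: bytes) -> int:
        node = self._root
        # one step per byte: the walk is bounded by the length of the line
        for byte in line:
            node = node.setdefault(byte, {})
        # the key None cannot clash with a byte value, so it marks "a line ends here"
        if None not in node:
            node[None] = self._next_id
            self._next_id += 1
        return node[None]


table = LineInterner()
first = table.intern(b"int x = 0;")
second = table.intern(b"int x = 1;")
again = table.intern(b"int x = 0;")
print(first == again, first == second)
```

Interning each line costs one walk over its bytes, after which two lines are identical exactly when their ids are equal.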

True, though it can't have been a very good mystery if so readily answered. Thanks for the clarification, indeed the case was that "task" meant something not quite apparent to me (an expanded set of operations beyond the basic two).

I'd guess the signature hash length (perhaps via that OAEP construction which I haven't quite grasped) is tunable, allowing the peculiar file size to be obtained.

Sig file names don't reference the signer; perhaps some key identifier is encoded inside as in the GPG format.

Either your OS mostly-but-not-fully sorts its directories and wildcard expansions (unlikely) or else "data/sigs/dialogs_v1.patch.sig" snuck into the listing out of order.

And upon transcribing the pixel grids back to text form I spotted a couple basename changes, presumably:

(2021_dialogs_v1 dialogs_v1)

(eucore_gui_c_v1 eucore_gui_v1)

(eucore_initial_base_layer eucore_initial_blayer)

(servercore_genesis score_genesis)

Though if filenames are insignificant to the processing, they could have been scrambled entirely.

Re "taking on the maintenance of v.pl" I indeed couldn't have described my intention as that. More just doing some particular overdue maintenance since it had come up in studying it, in hopes of making it usable for my needs at the time.

Trust but ...verify! In other words - yes, it's even expected that in practice a much shorter hash is absolutely enough although note that the trouble with very short hashes is that you increase the risk of collisions (and yes, the design is made so that VaMP recovers easily even from collisions but that does mean a re-run and if you end up with very frequent re-runs, the whole theoretical gain from not using longer hashes turns into more of a nuisance in practice).
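For a sense of scale on that collision risk, the standard birthday approximation can be evaluated for a few hash lengths. The sketch assumes an ideal hash and distinct lines, and says nothing about how costly a re-run actually is in VaMP; `collision_probability` is a name made up for the example.

```python
import math


def collision_probability(line_count: int, hash_bits: int) -> float:
    """Birthday approximation: chance that at least two distinct lines share a hash."""
    pairs = line_count * (line_count - 1) / 2
    # 1 - exp(-pairs / 2^bits), written with expm1 to stay accurate for tiny values
    return -math.expm1(-pairs / 2.0 ** hash_bits)


lines = 1_000_000
for bits in (40, 64, 128):
    print(bits, collision_probability(lines, bits))
```

For a million distinct lines, 40 bits (roughly the 2*log2(line_count) figure suggested earlier in the thread) gives about a one in three chance of at least one collision, while a 64-bit word brings it below one in ten million.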

More importantly perhaps, since it's a more general matter of approach - when lacking either proof (of the mathematical sort) or a solid enough empirical basis to support some specific restriction, the best move is to build the tool as non-restrictive as possible. On one hand because it enables thus exactly the building of that previously missing empirical basis and on the other hand because there is nothing to lose with it - it's always easier to restrict at a later time than to... expand (not to mention that the expansion then will be out of necessity just another guess, basically oops, the first guess turned out wrong, will do another and hope it turns out better or... will do another!).

They could have but I didn't bother with it. Yeah, they could be called opjteiptu32q1p-953 (even without .sig at the end!) and it would still work fine for the machine (though I suspect the human user would not find any listing all that helpful in such a case).

Meanwhile the practice even neatly provided a concrete case when one actually *wants* a patch with the same simple name (aka the part without extension and otherwise as listed in the manifest file) while preferring a different extension to allow easy distinction, namely the case of what I'm calling now a ...shortcut patch! A shortcut patch is basically a regrind that either expands one patch into several (for instance as one digs deeper and separates larger changes into smaller ones with added comments en-route for easier digestion) or does the opposite aka collapses together in a single patch the total changes contained in a set of linked patches. In either case, VaMP is of invaluable help as it provides a *reliable way* to do this, meaning with hard, mathematical guarantees that the regrind is *exactly equivalent* - precisely helping the operator with what the machine does best, it's such a pleasure to have at hand.
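How VaMP establishes that equivalence is not spelled out here. One simple way to check it independently is to compare a digest of the full tree obtained by applying the original chain of patches with that of the tree obtained from the shortcut patch. The sketch below assumes both results are already on disk as two directories; `tree_digest` and `same_state` are hypothetical helpers, and SHA3-256 is an arbitrary choice of hash.

```python
import hashlib
import os


def tree_digest(root: str) -> str:
    """Digest of every relative path and file content under root, in a fixed order."""
    outer = hashlib.sha3_256()
    for dirpath, dirnames, filenames in os.walk(root):
        dirnames.sort()  # makes os.walk visit subdirectories in a stable order
        for name in sorted(filenames):
            path = os.path.join(dirpath, name)
            rel = os.path.relpath(path, root).replace(os.sep, "/").encode("utf-8")
            with open(path, "rb") as handle:
                content_hash = hashlib.sha3_256(handle.read()).digest()
            # length-prefix the path so that (path, content) pairs cannot run together
            outer.update(len(rel).to_bytes(4, "big") + rel + content_hash)
    return outer.hexdigest()


def same_state(tree_a: str, tree_b: str) -> bool:
    """True when both directories hold the same files with the same contents."""
    return tree_digest(tree_a) == tree_digest(tree_b)
```

Empty directories are ignored by this digest, which is harmless if they are rejected as invalid input anyway.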

On a side note, I'll add for my own future reference that the above use case does push, of course, that change I wanted to the tree visualization too, to finally have it correctly focused on the code rather than on how the changes happen to be packed at one time or another but I still have more pressing work elsewhere so it will still have to wait a while.
