Having given more time and more background thinking to the question in the title, I now have 3 answers of yet-to-be-decided length (instead of the original one of ~500 words) and I still like none of them! So I'm writing it all down already, since it turns out to be still at the expansion and exploration stage rather than the contraction and synthesis stage. First of all though, and for any innocent readers that might happen by one day, here's the question in its larger context:

Diana Coman: I was in fact looking at maybe trying out generating at least a few *types* of meshes as opposed to this single-mesh-for-everything approach. But it's a long road to explore and I'm not sure if it's burning /first in line given how many other things are to do - arguably and as a barely-formed/fuzzy idea for instance heads would be more reasonable starting with an egg-like shape (rather than a rounded cylinder) and deforming that based on/around some feature points. Not that it wasn't fun to get a proper implicit equation for eggs!

Mircea Popescu: I think it should start with the following single step : explain to me how they'd be different from one another ?

The first and easy answer to the above would be the shortest too: seeing how the whole business of this fractal deformation thing means precisely fuzzying clarity itself, fracturing previously whole dimensions and otherwise in general and in plain language making an unrecognizable mess out of any initial given thing, there can't possibly be any way in which the results of such activity depend on the shape that one starts with! After all, if you twist and deform something long and hard enough, what you get is still a pile of rubbish, regardless of what you started with. In which case the whole problem is solved, there's nothing to do here, we can move on and work on something else already. Wouldn't that be tempting, too?

The trouble with the above first and easy answer is that it's pure (and even slightly bent) theory pushed to the extreme and has not much to do with the practical matter at hand: first, the goal is precisely to make a *recognizable* mess of a mesh rather than a totally random mess (of a mesh) - even if, perhaps, barely recognizable rather than strongly so and even if recognizable by the implicit system that human perception uses rather than by an explicit set of rules; second, fractals may result in messy, seemingly random shapes indeed, but their randomness is just an illusion and an artefact of either limited space/time available or of our own limited perception of everything that the fractal can pack in such a tiny space. So the second and unhelpful answer is that in theory they need not be any different but in practice they will be (as the starting shape gives the space in which the fractal deformation works)!

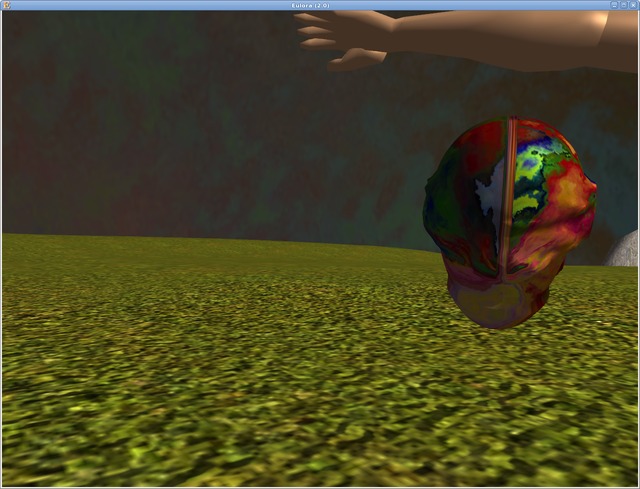

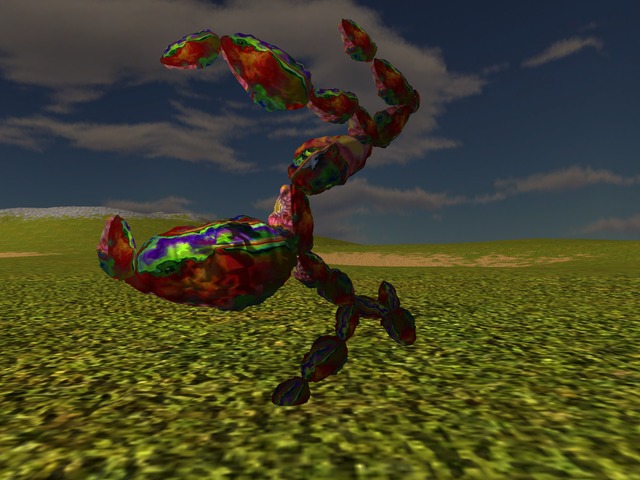

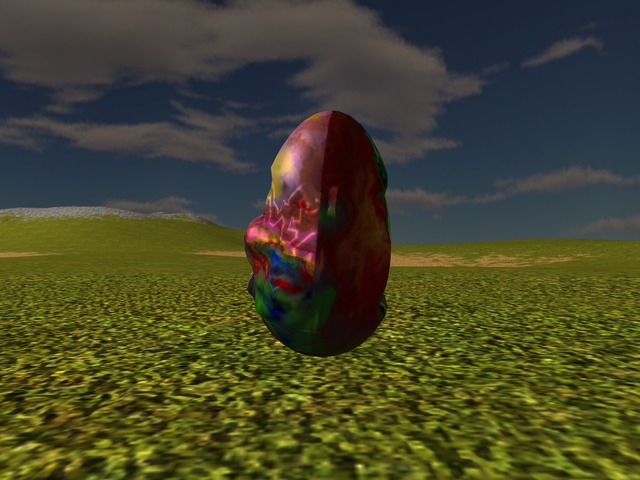

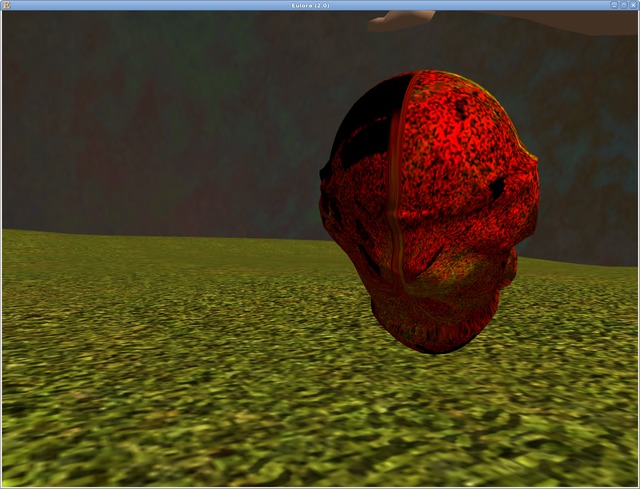

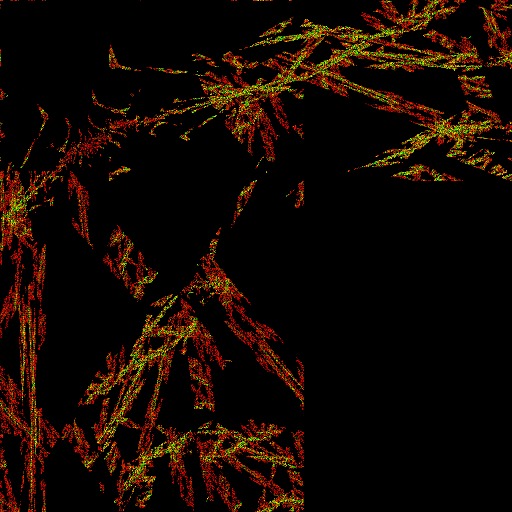

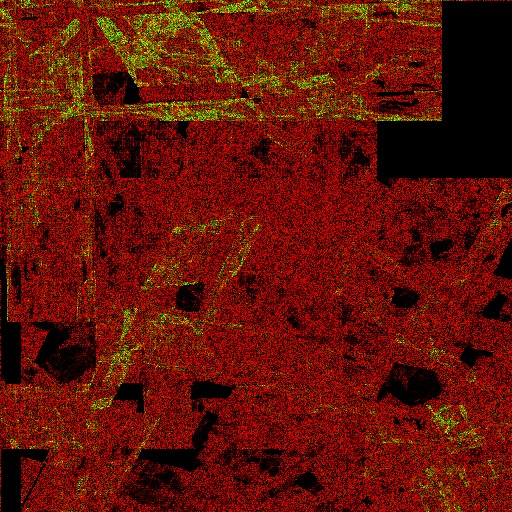

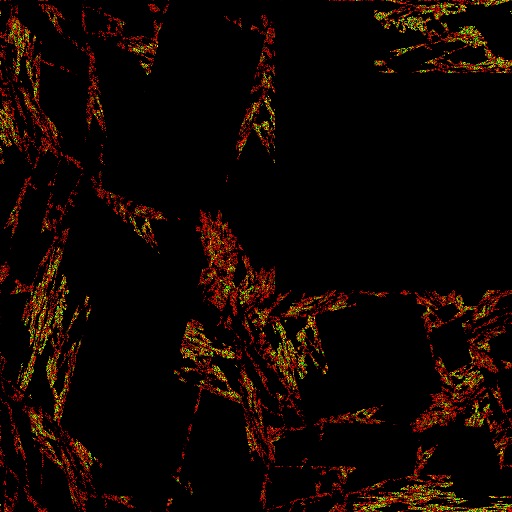

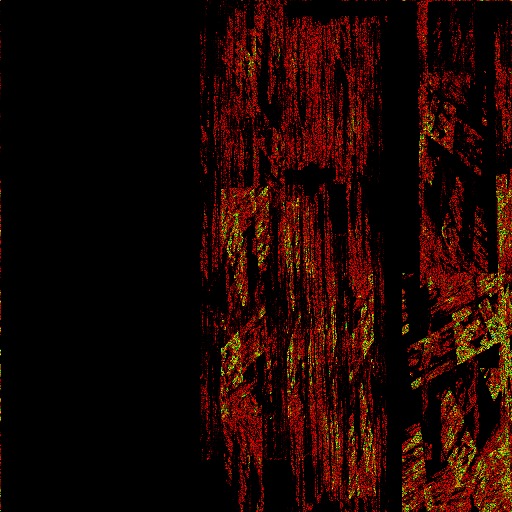

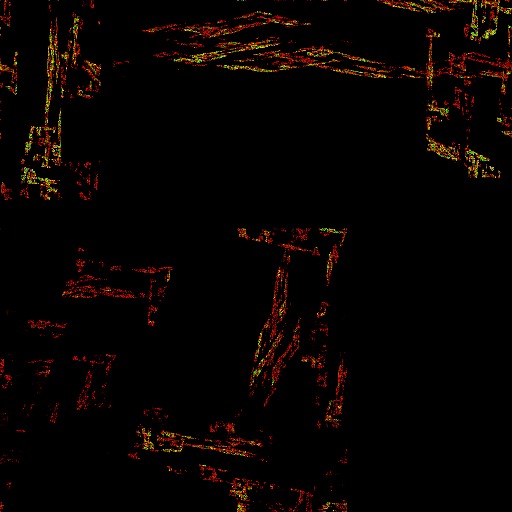

Finally, the third and very long answer says at core that they'd be different in style (in the way, for instance, swirly textures make a clearly distinct group from Escher-type textures and both are still different from the naive/surreal set), as the initial shape acts as a sort of starting point: while one can arguably get anywhere from any starting point, the sort of things one meets first are going to be different around one starting point as opposed to around a different one; moreover, the distance to travel and the difficulty in finding and following the road to the sort of thing desired will also depend on where one starts from. As illustration of what "different in style" might mean specifically, here are a few concrete pictures anyway, as they can't hurt - although they are just a random pick really and they can't say all that much of the whole story either (for all the popular pretense otherwise). For a simple thing that is perhaps not that obvious at first: note that the deformation of the cylinder shape resulted in stray parts, unconnected to the main body. This is a result of having to crank the noise up quite a lot to get from a straight cylinder to something else, basically an effect of that longer distance to travel (for an even clearer illustration of cranking the noise way too far up, see the first attempts at mesh deformation). The egg-shape has no such trouble, as there is way less deformation applied (and even limited as to affected area), though with significant and visible effect:

On a superficial level (that can be easily shot down with "that's an artefact of the implementation/approach, damn it!"), the reason for there being a difference between starting with an egg shape vs starting with a cylinder shape in the first place is that the fractal mesh deformation effectively acts on a fuzzy region around the initial shape and is called upon to do at least 2 types of things: at the higher level, to morph the overall shape into one that is close enough to be recognizable as part of one specific group (e.g. "heads" or "limbs" - those do tend to have different proportions and overall shape); at the lower level, to create detail that fits that specific group's features (e.g. "eyes and mouth"). Having one single deformation do both reasonably well is theoretically possible but practically more likely a nightmare, as it's quite unlikely that deformations making plausible eyes, for instance, are *also* helpful to inflate a straight cylinder into a plausible head-like shape. Certainly, there's also the route of applying several distinct deformations, basically one for the higher-level shape and one for the lower-level features - the question in this case is whether it's worth the effort to do both. For one possible direction, the high-level shape might even be better addressed at setting-on-bone time: perhaps the mesh should really remain just a surface and thus concerned with feature-level details, leaving the rest to be decided by the underlying bone on which the mesh is to be stretched (this would require that the bone therefore has at least 2, possibly 3 dimensions). Nevertheless, what having all those ultimately confusing rather than clarifying options means is that so far the approach is not general enough to be fully independent of the starting shape - and perhaps that is an issue worth discussing first and in more detail anyway.
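To make the "fuzzy region around the initial shape" a bit more concrete, here is a minimal sketch of two implicit starting shapes and a bounded displacement added to their field. Everything in it is an assumption made for illustration: the function names, the particular egg-like field (an ellipsoid with an exponential squeeze along one axis) and the sum-of-sines `wobble` that merely stands in for a fractal noise; none of it is taken from the actual generator or polygonizer discussed here.

```python
import math

def rounded_cylinder(x, y, z, radius=1.0, half_height=1.5):
    """Signed distance to a capsule around the z axis (negative inside)."""
    nearest_z = max(-half_height, min(half_height, z))  # closest point on the axis segment
    return math.sqrt(x * x + y * y + (z - nearest_z) ** 2) - radius

def egg(x, y, z, a=1.0, b=1.4, k=0.25):
    """Hypothetical egg-like field: an ellipsoid whose cross-section shrinks as z grows.
    Negative inside, positive outside; it is not a true distance."""
    return (x * x + y * y) / (a * a) * math.exp(k * z) + (z * z) / (b * b) - 1.0

def wobble(x, y, z, octaves=4):
    """Stand-in for fractal noise: octaves of sines, normalised to stay within [-1, 1]."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0
    for _ in range(octaves):
        total += (amplitude * math.sin(frequency * x + 1.3)
                  * math.sin(frequency * y + 2.1) * math.sin(frequency * z + 0.7))
        norm += amplitude
        amplitude *= 0.5
        frequency *= 2.0
    return total / norm

def deformed(base, amplitude):
    """Return a new field: the base shape plus a displacement bounded by `amplitude`."""
    def field(x, y, z):
        return base(x, y, z) + amplitude * wobble(x, y, z)
    return field

head_like = deformed(egg, 0.2)               # small push around an egg
limb_like = deformed(rounded_cylinder, 0.2)  # same push around a rounded cylinder
```

Since `wobble` never exceeds 1 in absolute value, the deformed surface can only sit where the base field is within `amplitude` of zero; for the capsule, whose field is a true distance, that is literally a band of that thickness around the original surface. A larger amplitude widens the band, which is the knob that has to be turned up to get far away from the starting shape.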

Before attempting though a discussion of the very approach itself and of choices regarding the mix of chaos and structure in mesh or texture generation, there are a few basic terms that I'll surely end up needing to remember in some years from now if I ever re-read this:

- Chaotic behaviour is the result of errors/noise overpowering the input/main signal. Any tame and otherwise very well behaved system that reacts deterministically and predictably to a known input signal can in principle become chaotic: all it takes is for errors/noise to accumulate at a pace greater than the system's own capacity to self-correct and/or identify the original signal as distinct; as soon as the original signal is drowned by errors/noise, the behaviour of the system becomes chaotic, with little prediction possible *in the short term*. The short-term part is quite important here because that is exactly where the unpredictability lies: what the system might do immediately next depends so much on just what random bit it interprets as signal, after all. Long-term though, at the limit (of infinite time), chaos itself is the tamest and most predictable of things, really (see below).

- Fractals are feedback systems and as such, their limits (aka what they tend to, given infinite time to run) are called attractors. The feedback nature of fractals means that they can accumulate and/or magnify errors very quickly indeed, basically turning into chaotic systems as per the definition above. And at the intersection of attractors with chaotic systems, there are some of the most interesting of attractors indeed, namely the unique attractors:

- Unique attractors define the shapes towards which chaotic systems reliably tend in the long term. In other words, regardless of the unpredictability of a chaotic system's *next* step, the final result of *all* those steps is itself entirely and reliably determined and predictable upfront (whether it's also *correctly* predicted or not depends on the knowledge of the one attempting to predict, not on the chaotic system that is already walking that shape anyway). All it takes for that determined outcome to be fully achieved is time - possibly an infinite amount of it, too (it's the result at the limit, what the fractal tends to).
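The short-term vs long-term distinction in the terms above can be seen in a few lines, as a sketch under stated assumptions: the three maps below are made-up rotate-shrink-translate transformations (each halves distances), and `gap_history` is a hypothetical helper that walks two very different starting points through the same randomly picked sequence of maps.

```python
import math
import random

def make_map(angle, scale, tx, ty):
    """Rotation around the origin, uniform contraction by `scale`, then translation."""
    c, s = math.cos(angle), math.sin(angle)
    def apply(point):
        x, y = point
        return (scale * (c * x - s * y) + tx, scale * (s * x + c * y) + ty)
    return apply

# Illustrative maps only; each one shrinks every distance by exactly one half.
MAPS = [make_map(0.3, 0.5, 0.0, 0.0),
        make_map(-1.1, 0.5, 1.0, 0.0),
        make_map(2.0, 0.5, 0.0, 1.0)]

def gap_history(start_a, start_b, steps, seed):
    """Apply the same random sequence of maps to both points; record their distance."""
    rng = random.Random(seed)
    a, b = start_a, start_b
    gaps = []
    for _ in range(steps):
        chosen = rng.choice(MAPS)      # the *next* step is not predictable
        a, b = chosen(a), chosen(b)
        gaps.append(math.hypot(a[0] - b[0], a[1] - b[1]))
    return gaps

gaps = gap_history((100.0, -50.0), (0.0, 0.0), steps=60, seed=1)
```

Which map gets picked at each step is up to the die, yet the gap between the two walks halves every single time, so both end up tracing the same shape regardless of where they started. That halving is what a contraction buys: the starting point is forgotten and only the attractor remains.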

Considering the goals outlined earlier (recognizable but diverse volumes/shapes/images), one way to look at mesh/texture generation is as an exercise in finding the right balance between - and even place for! - chaos (which brings the desired diversity) and structure (or order, if you prefer; at any rate, that part which ensures the result is still recognizable as part of one given group as opposed to just random noise). The exact way in which order is encoded as well as the concrete way in which chaos is brought in and harnessed make together for a significant decision that goes even more directly to the core of the matter than does the question in the title and trying to distill the answer to the latter kept pushing me towards grappling with this underlying decision and making it more clearly explicit. While I'm not quite sure I am fully there with it, the whole exercise certainly helped in various ways, so I'll go through what clarity I've got out of it, such as it is.

Contemplating this how-to-mix-chaos-with-order-for-pleasing-effect issue, one can be tempted to say that fractals already contain both chaos and structure, being thus in and by themselves the perfect and full solution: as chaotic systems, fractals provide short-term unpredictability (hence the desired diversity) AND long-term structure (aka the shapes defined by unique attractors, the limits towards which the fractal will tend). The trouble is that the practice is more limited than the theory: the structure as defined by the unique attractor is fixed indeed but this doesn't mean that it's either a recognizable structure or in any way known/easy to figure out upfront; moreover, banking on the structure that is the limit may mean that one needs to run the generator for a very long time indeed to get to the part where the chaos-order balance turns just right for the purpose at hand and stopping any earlier than that turns out rubbish and nothing more. Add also to this the fact that the whole theory assumes of course perfectly random noise as opposed to the fake sort that one can get on a computer - and funnily enough, the punishment for trying to sneak in more structure through non-fully-random noise is a postponement of reaching that desired limit of determinism and structure otherwise. In other words, there are a lot of potentially infinite roads to travel and very few signs (if any at all) to help you avoid at least those that take a long and unknown time to get in the end nowhere you have any interest to go to!

To avoid therefore the above death-like temptation of pure theory, there are only 2 main avenues left to still get both chaos and structure in without having to wait a lifetime to even find out if one got it wrong or possibly almost-right: one can use the short-term unpredictability of the fractal's chaotic nature to provide the desired variety, eschewing the potentially-infinite wait for having the fractal's structure manifest too - in which case the core structure has to come (or at the very least be augmented) from a different part that is external to the fractal itself; alternatively, one can use the long-term determinism of the fractal's chaotic behaviour to provide the core structure - to the extent that this is known upfront and/or can be determined easily enough. Separate from this, one can of course further use fractals simply as efficient packers of a sort, basically as non-chaotic feedback systems - and I'd say this is by far the commonest type of use, too!

Almost all the attempts I made so far at both mesh and texture generation[1] use mainly the first option above: it's the fractal's short-term unpredictability that is called upon, as a relatively cheap (and controllable to a reasonable extent) way to provide pleasing variety. And the sort of error introduced into the fractal's feedback loop is quite carefully limited so as to give a sort of push towards a chaotic system but not too much of it - as previously noted, too much of that and the result quickly turns to... noise, unsurprisingly. Structure is injected through all sorts of things, from the prng to the structure of the noise in use (lattice for Perlin's, cells for Worley's) and to the functions used for domain warping or for combining fractal transformations. As noticed with the trig textures, the external structure thus provided can be perhaps dry but surprisingly natural-looking otherwise, if well chosen. The trouble is at that "well chosen" - basically this route has the pitfall of ending up forever tweaking various parameters in search of the "well chosen" for a specific purpose. And it seems to me that this is how one ends up in the "sea of parameters" I mentioned previously (and that I tried to escape with the tidy-up of my texture generator, only to find I merely got a bit of a better grip on it while still nevertheless fully submerged otherwise).
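For a feel of where that sea of parameters comes from, here is a small sketch of the first option: a lattice noise summed over a few octaves and then domain-warped. It is not the generator discussed here. Plain value noise is swapped in for Perlin's gradient noise to keep it short, and the hash constants, offsets and all names (`lattice_value`, `fbm`, `warped`) are assumptions made for illustration.

```python
import math

def lattice_value(ix, iy, seed=0):
    """Deterministic pseudo-random value in [0, 1) for an integer lattice point."""
    h = (ix * 374761393 + iy * 668265263 + seed * 1442695041) & 0xFFFFFFFF
    h = ((h ^ (h >> 13)) * 1274126177) & 0xFFFFFFFF
    return (h ^ (h >> 16)) / 2 ** 32

def smooth(t):
    """Smoothstep fade so that neighbouring lattice cells blend without creases."""
    return t * t * (3.0 - 2.0 * t)

def value_noise(x, y, seed=0):
    """Interpolate the four lattice values surrounding (x, y)."""
    ix, iy = math.floor(x), math.floor(y)
    fx, fy = smooth(x - ix), smooth(y - iy)
    v00, v10 = lattice_value(ix, iy, seed), lattice_value(ix + 1, iy, seed)
    v01, v11 = lattice_value(ix, iy + 1, seed), lattice_value(ix + 1, iy + 1, seed)
    low = v00 + (v10 - v00) * fx
    high = v01 + (v11 - v01) * fx
    return low + (high - low) * fy

def fbm(x, y, octaves=4, gain=0.5, lacunarity=2.0, seed=0):
    """Sum octaves of noise: each one finer (lacunarity) and weaker (gain)."""
    total, norm, amplitude, frequency = 0.0, 0.0, 1.0, 1.0
    for octave in range(octaves):
        total += amplitude * value_noise(x * frequency, y * frequency, seed + octave)
        norm += amplitude
        amplitude *= gain
        frequency *= lacunarity
    return total / norm

def warped(x, y, strength=0.5, seed=0):
    """Domain warping: look the noise up at coordinates pushed around by more noise."""
    dx = fbm(x + 5.2, y + 1.3, seed=seed + 100) - 0.5
    dy = fbm(x + 1.7, y + 9.2, seed=seed + 200) - 0.5
    return fbm(x + strength * dx, y + strength * dy, seed=seed)
```

Even this toy already has `octaves`, `gain`, `lacunarity`, `strength`, a seed and a handful of arbitrary offsets to pick, and each of them changes the look of the result; a real generator multiplies that by every layer and every combining function.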

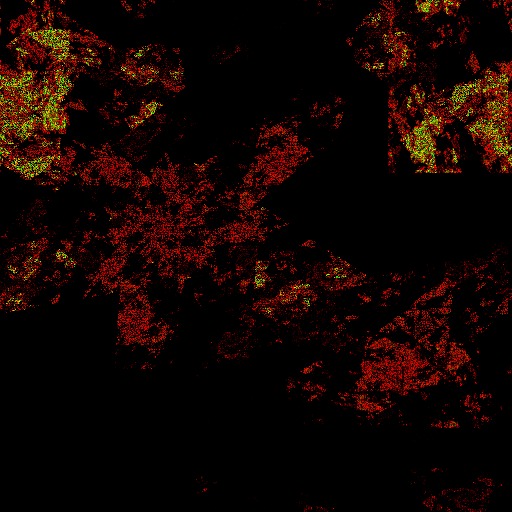

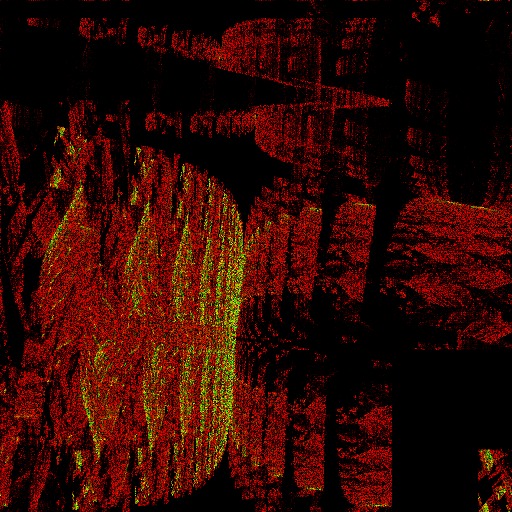

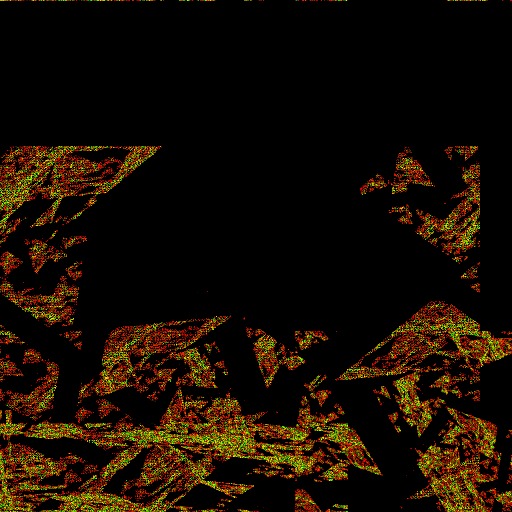

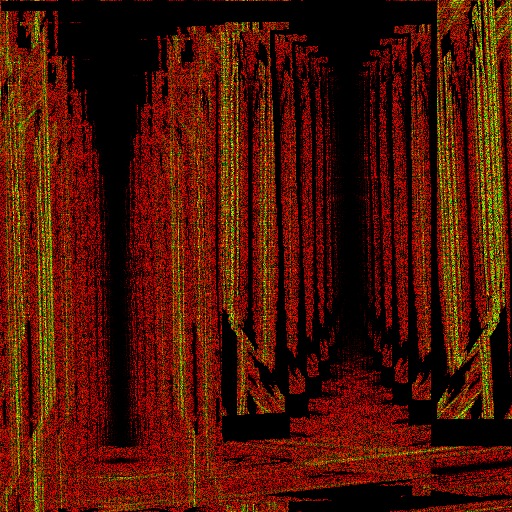

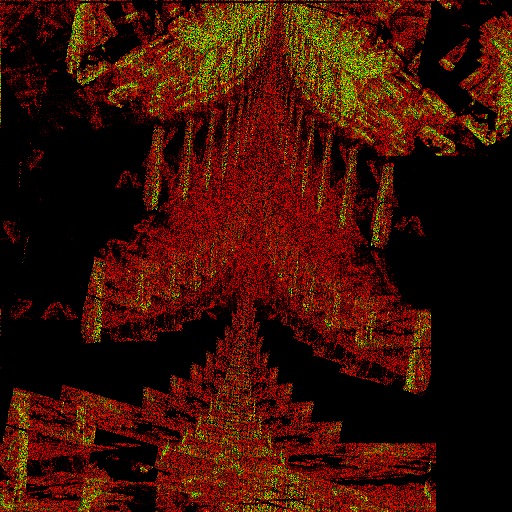

Does the above conclusion mean that one should therefore go instead for that second option of relying on the deterministic (at limit) behaviour of chaotic systems to provide the required structure (thus avoiding the sea of parameters and eternal tweaking within it)? It certainly makes for an appealing route (at least while submerged otherwise and fighting to keep track of the 1001 parameters of the other approach) but what would it even mean, right? Well, for textures at least, I had to get the answer and that meant not only an entirely new set of textures but... a new generator, too! At any rate, before going through the technical details, here's the first sample of outputs, a most basic sketch of what this approach might mean - it reminds me of caveman drawing style, for better (seems quite realistic really) and for worse (some are such awful scribbles and scratches!):

The above samples are but a few textures from what is essentially an entirely new set, made with an entirely new generator too. The switch of approach goes deep here: it's literally changing from evaluating the value of each pixel to drawing the image with repeated pixel-sized brush strokes that accumulate to different extents in different areas. A chaotic feedback system (an IFS - iterated function system) is created pseudo-randomly (i.e. MT, the Mersenne Twister, is used to pick all the required parameters within some carefully constrained intervals to ensure that there IS a unique attractor) and then iterated 90 million times[2] in the 2D space of the image. Each position records the number of times it was hit and then the colour of the corresponding pixel is simply calculated for now by multiplying the number of hits by 16 (I didn't experiment much with it; this made the pattern visible and so it was good enough for now, for this totally new thing). On the most attractive side, it's indeed way, way simpler to generate this way as many textures as one might possibly want, pretty much in the way the skeleton generation goes - it's out of that sea of parameters to tweak and into the more interesting place of finding the constraints that filter the sort of thing one is after. On the troublesome side, it's unclear to me if this approach can indeed produce *all* the different types of textures one might want. On the even more troublesome side for the topic of this article, it's even less clear to me if the same approach makes sense (or can even work directly with the current polygonizer, sheesh) for the mesh generation!
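The procedure just described can be sketched roughly as below. This is only an approximation under loud assumptions: the parameter intervals, the weights for the biased die, the image framing via `BOUND`, the clamp to 255 and the names `random_ifs` and `render` are all invented for the example, and the iteration count is far below the 90 million mentioned. What it does share with the description is the shape of the thing: a seeded Mersenne Twister (which is what Python's `random.Random` implements) picks 4 transformations, each a rotation around the origin plus a contraction plus a translation, and every visited position counts its hits.

```python
import math
import random

def random_ifs(seed, n_maps=4):
    """Pick n_maps rotate-contract-translate maps and their selection weights."""
    rng = random.Random(seed)  # Python's random.Random is a Mersenne Twister (MT19937)
    maps = []
    for _ in range(n_maps):
        angle = rng.uniform(0.0, 2.0 * math.pi)
        scale = rng.uniform(0.3, 0.7)   # assumed interval; below 1 so the map contracts
        tx, ty = rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)
        maps.append((scale * math.cos(angle), scale * math.sin(angle), tx, ty))
    weights = [rng.uniform(0.5, 1.5) for _ in range(n_maps)]  # the biased die
    return maps, weights, rng

# With scale <= 0.7 and translations no longer than sqrt(2), a walk started at the
# origin stays inside a disc of this radius, so the image can be framed upfront.
BOUND = math.sqrt(2.0) / (1.0 - 0.7)

def render(seed, size=64, iterations=200_000, gain=16):
    """Iterate the IFS, count hits per pixel, and turn hit counts into grey levels."""
    maps, weights, rng = random_ifs(seed)
    hits = [[0] * size for _ in range(size)]
    x = y = 0.0
    for index in rng.choices(range(len(maps)), weights=weights, k=iterations):
        a, b, tx, ty = maps[index]
        x, y = a * x - b * y + tx, b * x + a * y + ty
        col = int((x + BOUND) / (2.0 * BOUND) * size)
        row = int((y + BOUND) / (2.0 * BOUND) * size)
        if 0 <= col < size and 0 <= row < size:
            hits[row][col] += 1
    # hits * gain as in the text; clamping to 8 bits is an assumption of this sketch
    return [[min(255, gain * count) for count in line] for line in hits]

image = render(seed=7, size=32, iterations=20_000)
```

The only thing to vary between textures here is the seed, which is what makes this route feel so different from the parameter tweaking of the other one: the work moves into choosing the intervals inside `random_ifs`, i.e. the constraints that filter which attractors are allowed at all.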

At the end of those 3k words, I think that far from answering the question all that clearly, I have added to it a bigger question as to the very core of the approach to explore in the first place. Ignoring that though and coming back to cylinder vs something-else, the best sort of answer I can extract at the moment from all this exploration is that egg-shapes would potentially have better feature-like deformation (i.e. for making passable heads). More generally, starting with a shape that is close enough to the one desired for a group of meshes (i.e. assuming that one *does* intend to create different meshes for types of body parts) allows the deformation to focus more closely on smaller details without having to become noisy just to achieve the overall shape too.

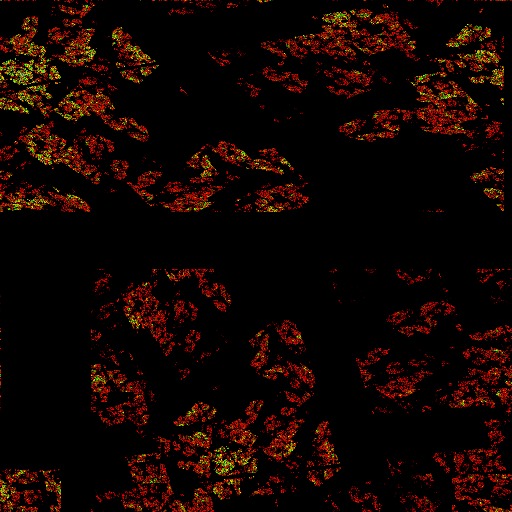

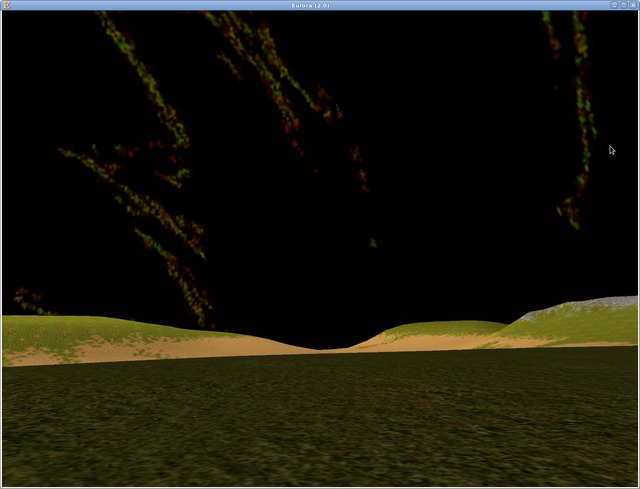

For an ending, here's one of the textures from the new set, used as a night-sky because why-not:

1. The exception would be the pure Mandelbrot textures, as that's exactly what the depiction there is: the shape defined by the unique attractor.

2. In principle the number of required iterations can be reduced through a better choice of some of the parameters. Specifically, the IFS is made of a set of transformations with associated probabilities so that at each iteration, one transformation is picked with a biased die from the set and applied; the IFS that I generate contain a preset number of transformations (4 in the set illustrated here), where each transformation contains one rotation around the origin, one translation and one contraction in the 2D space; the contraction is the part that ensures the existence of a unique attractor; a better choice of probabilities can in principle help the IFS converge faster to the shape defined by its attractor. For my generator, the seed used for MT serves essentially to identify each unique attractor, since all the other parameters are generated from the corresponding MT sequence - as can easily be noticed even in this selected sample of images, some attractors have more interesting shapes than others, heh. For the record, there are ways to find the IFS that has a given shape as its unique attractor - basically by going at it backwards, using rotated/translated/contracted versions of the shape to extract the corresponding parameters for the transformations and then picking probabilities to reduce the time needed for convergence. My point being exploration though, rather than fitting to some given shape, I didn't implement any of that finding-specific-shape part.


Honestly, on a superficial level I don't at all understand what I'm seeing ; is it egg, egg, hopeful for no reason introduced, egg ? Wut ?

Rather, my problem is somewhat different.

So, as part and parcel of this exercise, we've disposed with the notion that "the game must have an orc" "because games have orcs" and "here's how orcs go".

I like this, but I also see no reason to stop. Why should "eyes" in the sentence "this game must have eyes because games have eyes and here's how eyes go" be privileged over "orcs" ? What's the literal string "eyes" got that the literal string "orcs" doesn't, more e's ? And why should anyone care ?

I'm trying to build models that are interesting to interact with ; I don't specifically care if they have heads (with or without eyes upon them) anymore than I care if they "are" orcs. And so I'm not about to try and reconstruct traditional meaning out of ye olde kit of parts to be sewn together, "here's a head-like to go in the head-slot", it's too much work and if I wanted to do it I'd run a morgue not a game publisher.

It's perfectly true that the above "interesting to interact with" may well include "an artefact of either limited space/time available or of our own limited perception of everything that the fractal can pack" that'll be for simplicity called "head" -- but I am willing to let this be purely coincidental, rather than recherche an' belaboured.

I believe this is so.

I thought we were agreed indeed they're bi, rather than monodimensional.

I'd say this is an excellent statement.

I don't think the textures illustrated are interesting for our purposes ; it's not necessarily the case the underlying generator is kaiboshed, but too little shape, color and variety in the finished product to interest us.

I find myself in a different position : this exploration proves to me that we must adjust our hopefuls generator for gen5, such that meshes are randomly chosen as either egg or cylinder for each bone (50-50).

I am absolutely NOT going to take a library of shapes, destructure them through this process, and then juggle the resulting pile of functionals and parameters, as I'm not looking to reconstruct google over here.

This is because it's not worth doing ; technically we could do it, of course, and cheaper and better than the pantsuit state agency of the same name ever managed or ever could have hoped to.

Hm, how is the first NOT a hopeful too? What, it's generated the same - I just fixed it to 1 point on sphere and one inside, heh. Why would a hopeful not have just one mesh, anyway. The point there was to illustrate the "type" of mesh, hence illustrated with different textures, on different bones, from different angles. I suppose I could have specifically said this in the text, it didn't occur to me it was needed.

Ah, this is good to know in clear - for some reason I didn't realise this went full way too (as initially there was some talk of noses and specific parts, so apparently I had that stuck in my head). Good to have cleared it out, it certainly makes the path clearer otherwise.

I can set it aside, the easiest of things to do. It's worth noting though that at the very least colour is a matter of colouring map applied, not all that much one of texture generation as such. Any texture generator will spit out in the end a value for each pixel. How that value is translated to colour can make quite a difference in the end - though I certainly don't feel the need to explore fully that part too at the moment just to see what can this generator get out. Other than this lack of time though, I'd say this is actually a more powerful generator than the other - though possibly for different requirements than textures have. Perhaps.

Eh, I thought it was "why that hopeful in there???" Lolz. At least it was still convincing, therefore, that there is a difference between the two.

Aha. (And for the record, if I were to do that sort of library of shapes etc, I know already I'd lose interest in the whole thing in very short order.)

Ok but the transition's too brusque ; you don't explain what changed and I can't follow.

I'm not entirely against cheating if we find a workable cheat, "paste eyes like this" whatever. But it has to be cheap enough, I really wish to avoid building a car with hand-spun wheels.

I think I see what you mean there so I'll keep it in mind (on both matters really).

[...] alone for a few days and did instead all the writing of textures and then some more writing and different code and new textures and even various other code tidy ups before I sat down again to recalculate all the formulae (it's [...]


[...] rotation and then halving (hence: contraction) is precisely the sort of iteration required by an IFS (iterated functions system) to ensure a unique attractor. So yes, the chaos got trimmed to the bare minimum perhaps, what with [...]

[...] starting points. Going from the classic Mandelbrot fractal to Julia sets, from the signature rotate and shrink of chaotic systems to the clever diamond square and fm plus Perlin noise cheating shortcuts, there is always seemingly [...]