The best part about the old diff implementation from Unix's diffutils package1 is that it's visibly the result of a thinking process (as opposed to merely key-pressing) and otherwise relatively short by modern standards. I strongly suspect that both these qualities have nothing to do with the language of choice (C in this case) and rather all to do with the fact that at the time this code was written, people still *expected* rather implicitly that code will be read, not only blindly executed2.

Being thus a structured piece of code as opposed to a sprawling mess of monkey managers and other droppings, one reasonably expects to be able to follow a thread through it all and thus figure out in detail the exact way in which it all works. In practice, it turns out that this expectation holds for some parts3 but not quite for all. The place where it all rather screams of "everyone just gave up and wanted to be done with it already and good riddance" is, perhaps unsurprisingly, the code handling the writing of a diff patch/file. In other words, the messiest part is the communication of results in a form suitable for human consumption. Basically there are about 500 lines of code4 doing the "difficult" work of finding out the shortest edit script to change from one file to another and then there are *all the other lines of code* in a whole bunch of files, trying to handle somehow -anyhow!- the "easy" task of input/output so that the result of that work becomes indeed useful at all to a human user.

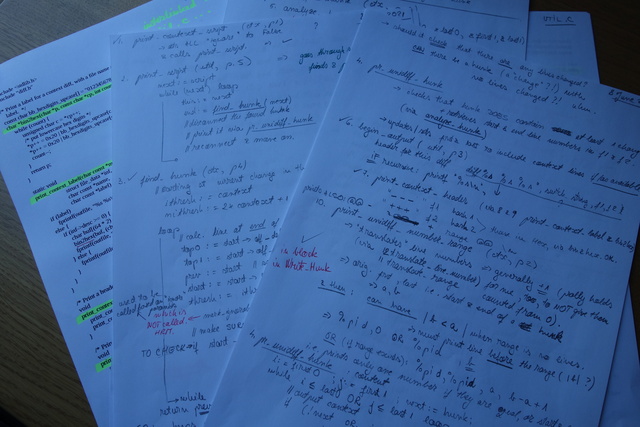

As a result and illustration of the above, the whole output of diff starts innocently enough with a simple call from analyze.c of print_context_script. That print_context_script is a method found in context.c and calling it launches one on a dizzying see-saw between context.c and util.c for no less than 15 calls even when the whole code is cleaned of all sorts and reduced to its most basic. So how exactly is one supposed to understand just what is output, how and when? Glad you asked - the only way that works is to follow it all, map it out clearly enough and then go through it line by line and word by word, adnotating and reviewing as many times as needed until all is clear indeed and without remainder. Since I did exactly this, given that it had to be done, here's the extracted map of calls (page numbers are based on my printing of the code so take them with a grain of salt), followed by a picture of how I even got to figure it all out quickly enough, anyway (since I've been told I never uploaded pictures of my hand writing, here's this lack fixed - have one with my writing and even my colouring, too):

- print_context_script (context.c, p1)

- print_script (util.c, p5)

- find_hunk (context.c, p4)

- pr_unidiff_hunk (context.c, p2)

- analyze_hunk (util.c, p8)

- begin_output (util.c, p3)

- print_context_header (context.c, p3)

- print_context_label (context.c, p1)

- bin2hex (context.c, p1)

- print_context_label (context.c, p1)

- print_context_header (context.c, p3)

- print_unidiff_number_range (context.c, p2)

- translate_range (util.c, p7)

- translate_lines_number (util.c, p7)

- translate_range (util.c, p7)

- print_1_line (util.c, p6)

- print_1_line_nl (util.c, p6)

- output_1_line (util.c, p7)

- print_1_line_nl (util.c, p6)

- print_script (util.c, p5)

This map is based on diffutils-2.8.1. ↩

For the wider context on this, see the whole Coding category on this blog. For just a starter article, see perhaps The Bugureaucracy. While there's a lot to be said about it all, to keep this footnote from exploding into a whole series of articles, I'll just note one tiny leaf of it all, namely that any "programming course" that focuses on code writing rather than code reading first and foremost is but a course in spamming, by another name. ↩

Mainly the cleanly mathematical ones as it were, with the neat result that it was a breeze to follow the implementation of the core algorithm of diff-ing aka Myers' algorithm. ↩

The diffseq.h file. ↩